A Linux Logbook

I created this logbook to document my attempt to understand how to spoof a user agent, and why one might need to. The problem came up when I tried to automate downloading a collection of files from the internet. My download requests came from wget, a utility that is not a web browser but makes HTTP requests much as a browser does.

I kept running into an error: the download request triggered a check on the website that wanted to verify my humanity. Because this is a book publisher's site, the check is no doubt there to protect their copyright. But the same type of thing could come up in any number of cases where a request is made and the site operator doesn't like "how" you are accessing the file.

So this is an exploration of how one might begin to investigate changing a user agent in wget to match the user-agent in Chrome.

Problem definition

I was using the wget command to download a PDF document.

The command kept failing with the following output:

Will not apply HSTS. The HSTS database must be a regular and non-world-writable file.

ERROR: could not open HSTS store at '/home/ddarden/.wget-hsts'. HSTS will be disabled.

--2020-05-27 10:26:48-- https://link.springer.com/content/pdf/10.1007%2F978-94-007-6863-5.pdf

Resolving link.springer.com (link.springer.com)... 151.101.192.95, 151.101.128.95, 151.101.0.95, ...

Connecting to link.springer.com (link.springer.com)|151.101.192.95|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: unspecified [text/html]

Saving to: ‘Group-Theory-Applied-to-Chemistry.pdf’

Group-Theory-Applied-to-Chemi [ <=> ] 13.54K --.-KB/s in 0.03s

2020-05-27 10:26:48 (504 KB/s) - ‘Group-Theory-Applied-to-Chemistry.pdf’ saved [13860]

At first glance the file appears to have been saved, and wget confirms it. Wget interpreted the command correctly and saved the response to the file named with the '-O' switch. But notice two things: the response type is text/html rather than a PDF, and the file is very small (13,860 bytes).
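One quick way to catch this kind of silent failure is to look at the first bytes of the saved file. A real PDF starts with the marker `%PDF-`, while an HTML page does not. The following is only a minimal sketch: `is_pdf` is a helper name I made up for illustration, and it assumes a `head` that supports `-c`, as on most Linux systems.

```shell
#!/bin/sh
# is_pdf is a hypothetical helper, not part of wget.
# It succeeds only when the file begins with the PDF magic bytes "%PDF-".
is_pdf() {
    [ "$(head -c 5 "$1" 2>/dev/null)" = "%PDF-" ]
}
```

It could be used right after a download, for example `is_pdf Group-Theory-Applied-to-Chemistry.pdf || echo "not a PDF"`.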

Work to resolve the issue

An attempt to open the PDF file in a viewer failed: invalid file.

Opened the file with vi:

<meta charset="utf-8">

<meta http-equiv="X-UA-Compatible" content="IE=Edge,chrome=1"/>

<meta name="viewport" content="width=device-width,initial-scale=1.0,maximum-scale=2.5,user-scalable=yes">

<title>Security check | SpringerLink</title>

<link rel="shortcut icon" href="/springerlink-static/409335052/images/favicon/favicon.ico">

<link rel="icon" sizes="16x16 32x32 48x48" href="/springerlink-static/409335052/images/favicon/favicon.ico">

<link rel="icon" sizes="16x16" type="image/png" href="/springerlink-static

It is HTML output. It looks like a regular HTML page, but notice that it contains little more than links to the favicon.ico file and the title "Security check". My request for the file is being treated as coming from an automated bot. But how? When I went into Chrome and downloaded the file, the download completed successfully.

Idea: Change the user agent

The theory is that if I change the user agent that wget sends, the server will treat my request like a browser's and give me the file that I am asking for.

Use Chrome's chrome://version page to display the user agent.

Look under Version for User Agent and copy the string:

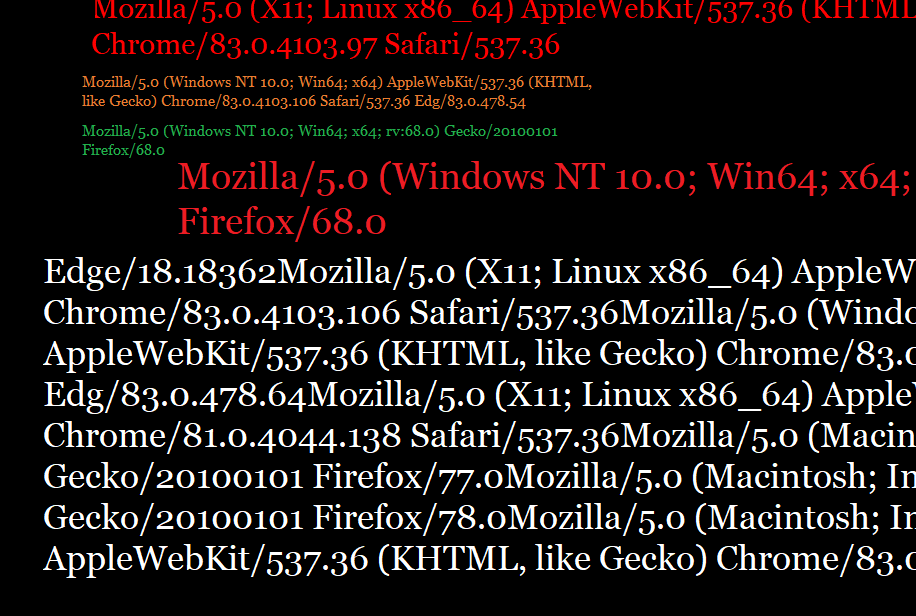

Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/83.0.4103.61 Safari/537.36

How to pass user-agent string to wget.

man wget | grep 'user'

This indicates that there is a user-agent command switch:

--user-agent=""

Using --user-agent="" with the string below gives the same error when downloading the PDF file:

Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/83.0.4103.61 Safari/537.36

I assigned this string to a variable and sent it through wget. It is still not working.

When I enclose it in quotes, wget literally evaluates every parameter as a website. When I leave the quotes off, it still errors out, and I have no way to know whether it is actually sending the user-agent string properly.
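A likely cause of wget treating pieces of the string as websites is shell word splitting. The user-agent string contains spaces, so if the variable is expanded without double quotes, a Bourne-style shell such as bash breaks it into separate arguments, and wget takes each extra argument to be a URL. The sketch below counts arguments instead of calling wget, so it can be run offline. The names `count_args` and `ua` are my own, chosen for illustration.

```shell
#!/bin/sh
# Hypothetical helper: prints how many arguments it received.
count_args() { echo "$#"; }

# The Chrome user-agent string from above, stored in a variable.
ua='Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/83.0.4103.61 Safari/537.36'

# Unquoted expansion: the shell splits the string at every space.
count_args --user-agent=$ua

# Double-quoted expansion: the whole string stays one argument.
count_args --user-agent="$ua"
```

The first call reports many arguments and the second reports one. Under that assumption, the wget call would need the double-quoted form, something like `wget --user-agent="$ua" -O file.pdf "$url"`, where `file.pdf` and `url` are placeholders.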

I still get a robot alert message when I go back to my browser after trying the wget command, but I am able to download the PDF in the browser once I go through the robot check.

To compare how Chrome and wget each send the request, the next thing to try is checking the headers.

Set Other Request Headers

Real web browsers will have a whole host of headers set, any of which can be checked by careful websites to block your web scraper. In order to make your scraper appear to be a real browser, you can navigate to https://httpbin.org/anything, and simply copy the headers that you see there (they are the headers that your current web browser is using).

Source: https://www.scraperapi.com/blog/5-tips-for-web-scraping
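The same httpbin.org page can be requested from wget, so that what wget sends can be put side by side with what the browser sends. This is only a sketch: `show_wget_headers` is a name I made up, and it assumes httpbin.org is reachable and echoes the request back as the article describes.

```shell
#!/bin/sh
# Hypothetical helper: ask httpbin.org to echo back the request it received,
# so the headers wget sends can be compared with the browser's.
# $1 is the user-agent string to send.
show_wget_headers() {
    wget --quiet --output-document=- --user-agent="$1" https://httpbin.org/anything
}
```

Calling `show_wget_headers "$ua"`, with `ua` holding the Chrome string, should print the request as the server saw it, which also answers whether the user-agent string is arriving intact.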

So what are next steps?

This problem is not complete. However the next steps that I want to take in the solution are the following:

- Check what other headers wget and Chrome are sending by using the website mentioned in the article.

- Attempt to set the user agent to the correct string in wget and test it.

- If that doesn't work, attempt to set some headers as per the article and see if I get anything different.
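For the last two steps, wget accepts extra request headers through its `--header` option, alongside `--user-agent`. The sketch below shows one way the call might be put together. The function name `fetch_like_browser`, the `WGET` dry-run variable, and the header values are all my own placeholders; the real values would need to be copied from what the browser sends, and there is no guarantee this gets past the security check.

```shell
#!/bin/sh
# Hypothetical helper, named for illustration only.
# Usage: fetch_like_browser URL OUTPUT_FILE USER_AGENT
# Set WGET=echo to print the arguments instead of downloading (dry run).
# The header values below are placeholders, not copied from a real browser.
fetch_like_browser() {
    "${WGET:-wget}" \
        --user-agent="$3" \
        --header="Accept: text/html,application/xhtml+xml,application/pdf" \
        --header="Accept-Language: en-US,en;q=0.9" \
        --output-document="$2" \
        "$1"
}
```

Running it once with `WGET=echo` set shows exactly which arguments wget would receive before any request is made.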

It may be that, if I continue with this, I will need to set X-headers like those sent by the browser. Unlike a browser, wget is not interactive, so being hit with a humanity check is very frustrating, especially when we are attempting to use automation to solve problems. For wget it is also an unrecoverable error.

Feel free to keep going with this challenge to find the appropriate user agent and/or X-header needed to solve the problem.